“Let’s get empirical”; Source: http://xkcd.com/943/

The User-Study Conspiracy Theory

For most of my academic life I worked on Pattern Discovery algorithms; perhaps too much, because nowadays I cannot help but count pattern occurrences everywhere I go. One of those patterns, which I want to call here “The User Study Conspiracy Pattern”, I discovered in recent years during Data Mining research presentations. It roughly goes as follows.

Whether I was at a large-scale presentation at an international conference such as KDD or ECMLPKDD or at a more intimate PhD defense, I often witnessed a variant of the following dialog during the Q&A part:

Q: This looks interesting, but is this really what users would want?

A: Well, I guess in order to really confirm that we would need to test this somehow with real users.

Q: Yes, I agree. Thank you.

Alright, so much for that. Next question. It almost feels like there is an unspoken agreement:

“Yes, for many of our contributions we would actually need to perform user-studies in order to evaluate them, but… we won’t (and won’t call each other out on this).”

Sounds like a conspiracy theory? I admit, between selective hearing and a general preference for attending talks that lend themselves to user-based evaluations, there are quite a few reasons not to jump to conclusions. Still, we might have found one (or two) interesting questions here:

“What is the fraction of Data Mining research that could have benefited from a user study, but the authors decided not to perform one?…and what are the reasons for these cases?”

So I joined forces with Thomas Gärtner and Bo Kang in order to shed some empirical light on these two questions. The idea was to perform an online poll, in which we would ask Data Mining authors directly whether they felt that user studies would be beneficial for their own work.

The Poll

The first question we had to answer when designing the poll was which authors to invite. While an open call on a collection of known mailing lists (perhaps even with a request to re-share) would have maximized our reach, we decided to target a more cleanly defined population. Since it was March and the ECMLPKDD deadline was approaching, we found that “the ECMLPKDD authors” were a good choice. To turn this idea into an operational definition, we further refined our target group to all authors of ECMLPKDD articles who provided a contact email address on the paper that was still valid on the day of our poll invitation, March 28.

Then, of course, we had to design the content. Since we wanted to maximize the response rate, we made it a priority not to ask for more than 5 minutes of a participant’s time. Consequently, we crafted a minimalist questionnaire focused on the two core questions we wanted to answer: what is the fraction of people who skip potentially insightful user studies, and what are their reasons for that? The one additional question we inserted was about the author’s field of work. The rationale here was that, while ECMLPKDD is a clearly defined population, it is quite a heterogeneous one, and we expected results to vary considerably, e.g., between Machine Learning researchers, who often work on clearly defined formal problems (that don’t lend themselves naturally to user studies), and pattern discovery or visual analytics researchers, who often try to develop intuitive data-exploration tools for non-expert users. So we wanted to be able to see differentiated results between those groups.

The literal final list of 3 questions was then as follows:

- Question:

  “In which of the following areas have you had original research contributions (published or unpublished) during the last two years?”

  Possible answers (multiple possible):

  - “Machine Learning”,
  - “Exploratory Data Analysis and Pattern Mining”,
  - “Visual Analytics”,
  - “Information Retrieval”, and
  - “Other”.

- Question:

  “During the last two years, was there a time when you had an original contribution that could have benefited from an evaluation with real users, but ultimately you decided not to conduct such a study?”

  Possible answers (exclusively):

  - “Yes”,
  - “No”.

- Question:

  “In case you answered “yes” to question 2, which of the problems listed below prevented you from conducting a user study?”

  Possible answers (multiple possible):

  - “No added benefit of user study over automatized/formal evaluation”,
  - “Unclear how to recruit suitable group of participants”,
  - “High cost of developing convincing study design”,
  - “High cost of embedding contribution in a system that is accessible to real users”,
  - “High cost of organizing and conducting the actual study”,
  - “High cost of evaluating study results”,
  - “Insecurity of outcome and acceptance by peers”, and
  - “Other”.

The Results

Out of the 525 authors who were invited to participate, 125 responded within the first 5 days after we opened the poll. We deliberately did not include a deadline in the invitation email and instead simply kept accepting answers until no new response had arrived for more than 7 days (which happened by the end of April). Ultimately, we ended up with 136 respondents, which corresponds to a very solid response rate of 25.9%.
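The response-rate arithmetic can be checked with a few lines of Python. This is only a minimal sketch: the counts are the ones quoted in the text, while the function name `response_rate` is a hypothetical helper introduced here for illustration.

```python
def response_rate(respondents: int, invited: int) -> float:
    """Return the response rate as a percentage of invited authors."""
    if invited <= 0:
        raise ValueError("invited must be a positive number")
    return 100.0 * respondents / invited


INVITED = 525  # authors invited to the poll (from the text)
EARLY = 125    # responses within the first 5 days (from the text)
FINAL = 136    # total respondents when the poll closed (from the text)

print(f"after 5 days: {response_rate(EARLY, INVITED):.1f}%")
print(f"final:        {response_rate(FINAL, INVITED):.1f}%")
```

Seen this way, the large majority of the final responses had already arrived within the first five days.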

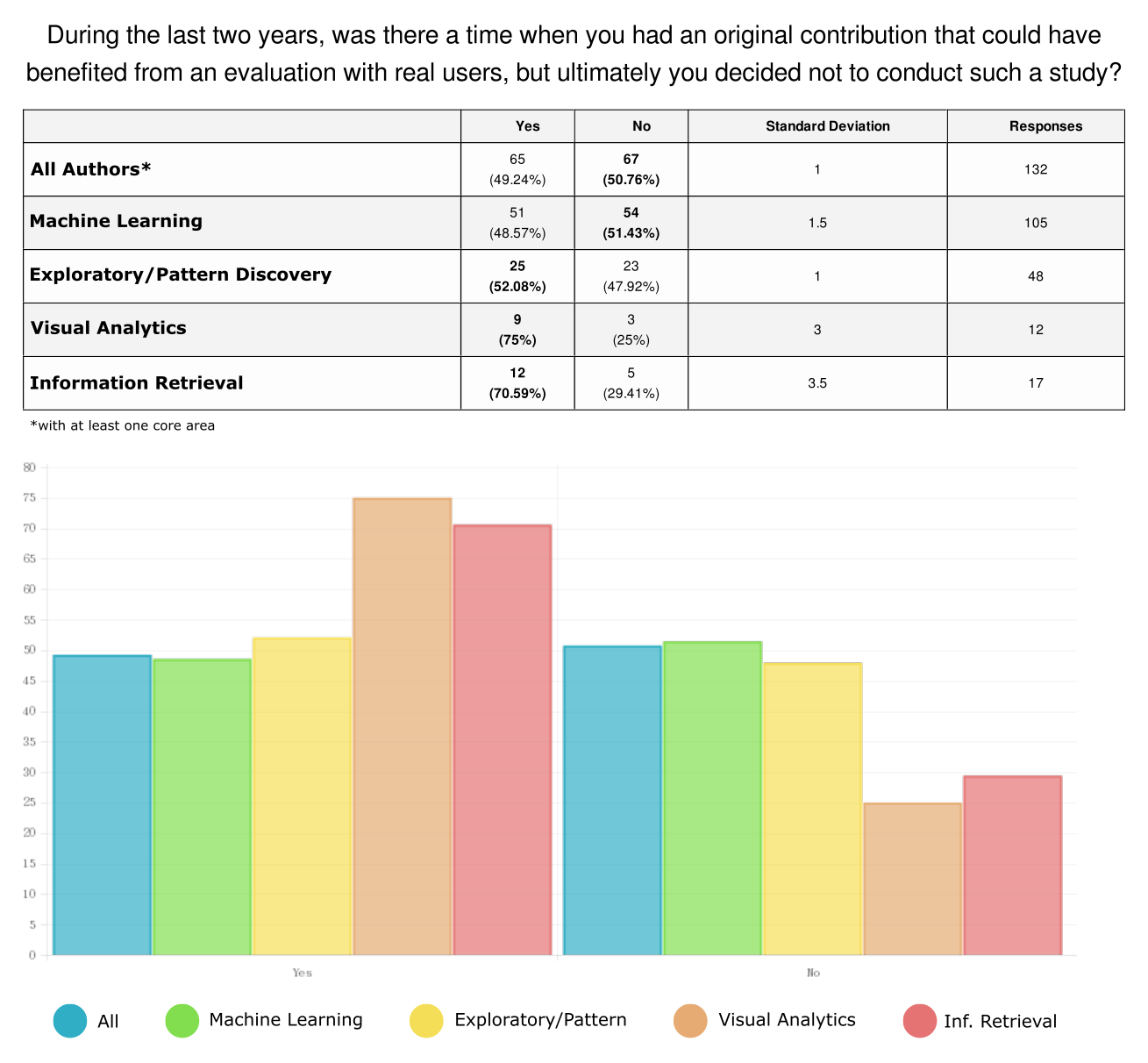

So let’s look at the results, starting with the central question: how much research is not backed up by a user study although it would make sense to do so? The accompanying image shows the results for this question (differentiated by the sub-fields queried in the first question). Note that for this summary we filtered out 4 responses that did not name any of the 4 core areas as their area of work and thus do not really represent data mining research.

So across the whole set of respondents the answer was “yes” in almost 50% of the cases. Restricted to authors who stated Machine Learning as one of the areas of their contributions (by far the dominant subject area), the “yes”-rate was slightly lower at 48.57%, but altogether surprisingly close to that of the Exploratory Data Analysis/Pattern Discovery group (52.08%). In the smaller groups of Visual Analytics and Information Retrieval, “yes” was the dominant answer with 75% and 70.59%, respectively. Note that the subgroups overlap because respondents could state multiple research topics.
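Because the subgroups overlap, one respondent can count toward several “yes”-rates at once. The following sketch shows one way such a tally could be computed. The function `yes_rates` and the toy responses are hypothetical and serve only to illustrate the bookkeeping; they are not the poll data or the analysis script we actually used.

```python
from collections import defaultdict

# The four core areas from Question 1; "Other" alone does not qualify.
CORE_AREAS = {
    "Machine Learning",
    "Exploratory Data Analysis and Pattern Mining",
    "Visual Analytics",
    "Information Retrieval",
}


def yes_rates(responses):
    """Compute the "yes" percentage per area and overall.

    `responses` is an iterable of (areas, answered_yes) pairs, where
    `areas` is the set of areas a respondent ticked in Question 1.
    Respondents without any core area are dropped, as in the summary.
    """
    counts = defaultdict(lambda: [0, 0])  # area -> [yes count, total count]
    for areas, answered_yes in responses:
        core = areas & CORE_AREAS
        if not core:
            continue  # no core area named: filtered out
        # A respondent counts once per named area and once overall.
        for key in core | {"All"}:
            counts[key][0] += int(answered_yes)
            counts[key][1] += 1
    return {key: 100.0 * yes / total for key, (yes, total) in counts.items()}


# Hypothetical toy responses, NOT the real poll data.
toy = [
    ({"Machine Learning"}, True),
    ({"Machine Learning", "Visual Analytics"}, True),
    ({"Machine Learning"}, False),
    ({"Other"}, True),  # dropped: no core area
    ({"Information Retrieval"}, False),
]
rates = yes_rates(toy)
for area in sorted(rates):
    print(f"{area}: {rates[area]:.2f}%")
```

Note that the per-area totals add up to more than the overall total, which is exactly the overlap effect mentioned above.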

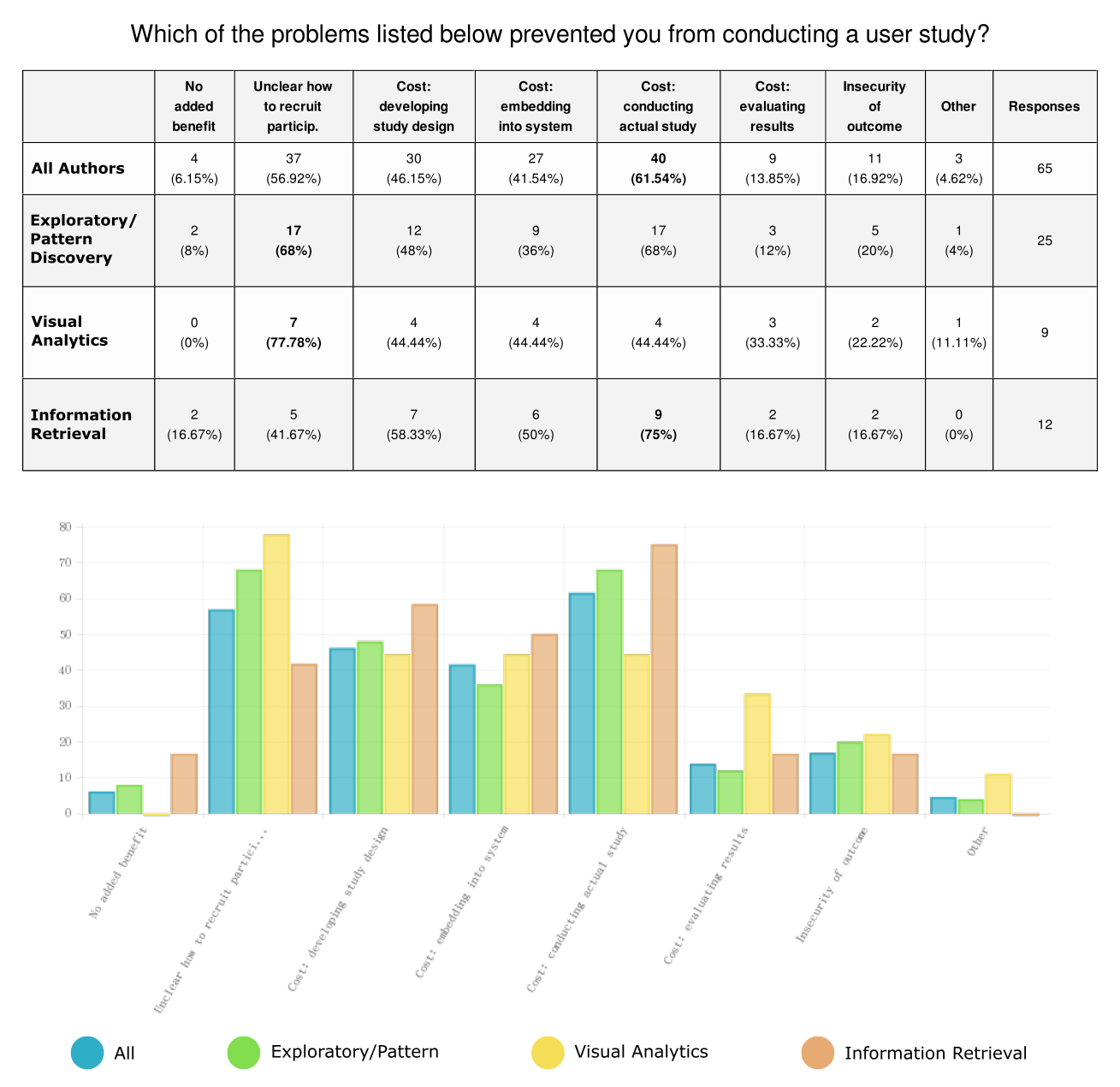

With this substantial number of “yes”-answers across all the different sub-populations, let us take a look at the remaining question: what are the reasons for the reluctance of authors to do something that, according to their own assessment, could be beneficial for their work? The accompanying image shows the distribution of the different reasons for the complete population of “yes”-responses as well as individually for the three sub-populations that had a “yes”-rate of more than 50%.

Answers to Question 3: most authors worry about recruiting participants and cost of conducting the study.

So the dominant individual reasons were “cost of conducting the actual study” (61.45%) and “unclear how to recruit participants” (56.92%). There are some interesting shifts between the sub-populations. Apparently, Pattern Discovery and Visual Analytics researchers worry more about where to recruit study participants than Information Retrieval researchers do (which might be because the first two tend to work more on systems for experts, which cannot be tested by a general audience). Also, the Visual Analytics group worries notably more about “the cost to evaluate the study results” (which might be because performance metrics are harder to establish in this area).

Returning to the big picture, it should be noted that at least one of the “cost” items was chosen by 98.5% of the “yes”-respondents. That is, almost all authors who skipped a study opportunity gave a cost aspect as at least one of their reasons. Finally, an important observation is that the first reason, “no added benefit”, was selected only rarely (6.15%).
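The aggregated “cost” figure counts a respondent once if any of the cost-related reasons was ticked. A minimal sketch of that aggregation follows, assuming that the “cost” items are the four answers of Question 3 that start with “High cost of”. The helper `share_with_any_cost` and the toy answers are hypothetical and not the real poll data.

```python
# Assumption: the "cost" items are the four "High cost of ..." answers.
COST_REASONS = {
    "High cost of developing convincing study design",
    "High cost of embedding contribution in a system that is accessible to real users",
    "High cost of organizing and conducting the actual study",
    "High cost of evaluating study results",
}


def share_with_any_cost(reason_sets):
    """Percentage of respondents who ticked at least one cost reason."""
    reason_sets = list(reason_sets)
    if not reason_sets:
        raise ValueError("need at least one respondent")
    hits = sum(1 for reasons in reason_sets if reasons & COST_REASONS)
    return 100.0 * hits / len(reason_sets)


# Hypothetical toy answers to Question 3, NOT the real poll data.
toy_reasons = [
    {"High cost of evaluating study results"},
    {"Unclear how to recruit suitable group of participants",
     "High cost of organizing and conducting the actual study"},
    {"No added benefit of user study over automatized/formal evaluation"},
    {"High cost of developing convincing study design",
     "High cost of evaluating study results"},
]
print(f"{share_with_any_cost(toy_reasons):.1f}%")
```

A respondent who ticks several cost items is still counted only once, which is why the aggregate can be far higher than any individual item without being their sum.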

The Bottom Line

So what to take from all this? I believe that even if we consider the rather defensive phrasing of Question 2 and acknowledge a certain gap between responding ECMLPKDD authors and “all of Data Mining research”, the data presented here allows a rather clear interpretation:

A substantial fraction of active Data Mining researchers would potentially be interested in adding an empirical layer to the support of their work if doing so had a lower cost/benefit ratio.

Now one could argue for increasing the benefit part through the negative incentive of punishing those papers that would lend themselves to an extrinsic empirical evaluation but are lacking one. However, this would require a lot of players to change their behavior without having an individual incentive. So from a game-theoretic point of view there is some doubt that this approach is actually feasible. Even if it worked, it could lead to a somewhat unproductive division between empiricists and those who fight for their right to abstain (conspiratorially or openly).

Instead, I believe that a more promising approach is to try to reduce the costs (and needless to say, I hope realKD and Creedo can make a contribution here). If it is more affordable to design, conduct, and evaluate studies with real users, then those authors who feel the greatest need to do so can go ahead and test their theoretical assumptions from a different angle. These theoretical assumptions, once properly evaluated, can then be used by other authors to develop improved methods within purely algorithmic papers. This way, we could establish a cooperative culture in which individual pioneers can afford to go the extra mile and successively create an empirical pillar of support for the joint research activities of the community as a whole.